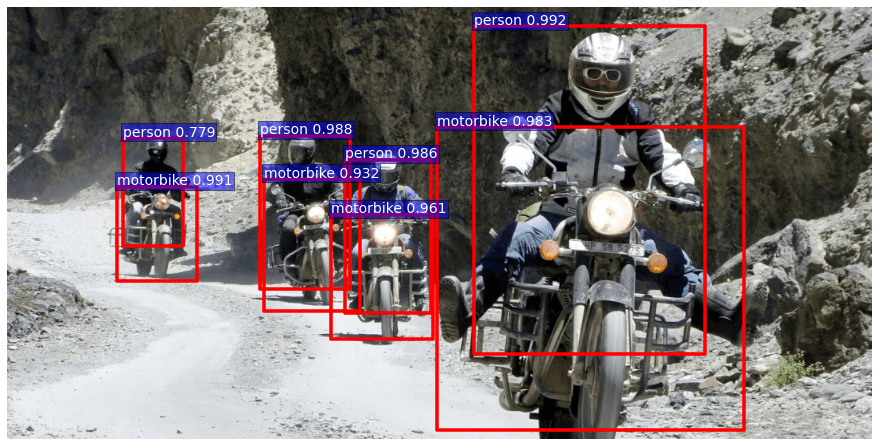

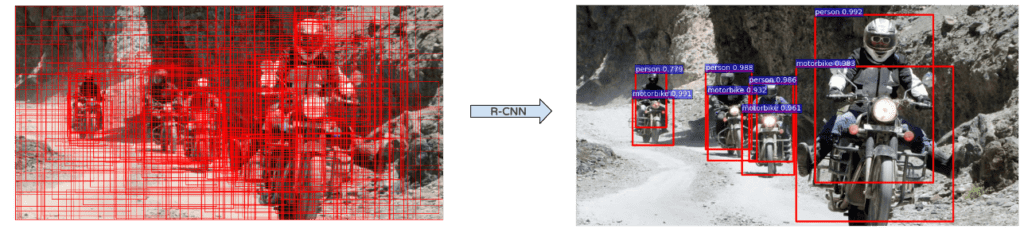

Recently, there have been many improvements in the Artificial Intelligence sector thanks to Deep Learning and image processing. It’s now possible to recognize images, or even find objects inside an image, with a standard GPU. Fig.1 is an example of what can be obtained in a matter of milliseconds. Let’s take a look at object detection!

In this article, the main focus will be the object detection algorithm named Faster R-CNN. However, in order to fully understand how it works, we will first go back in time and explain the algorithms it was built upon. As this might take a while, the article is split into two parts.

We won’t delve into all the tricky details or the underlying mathematics of these algorithms. However, prior knowledge of Convolutional Neural Network theory (convolution, pooling, ...) will probably make the reading easier.

So let’s dig a bit into the Deep Learning world!

Alexnet (2012)

We cannot talk about Deep Learning without mentioning Alexnet. Indeed, it is one of the pioneering deep neural nets whose aim is to classify images. It was developed by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton and won an image classification challenge (ILSVRC) in 2012 by a large margin.

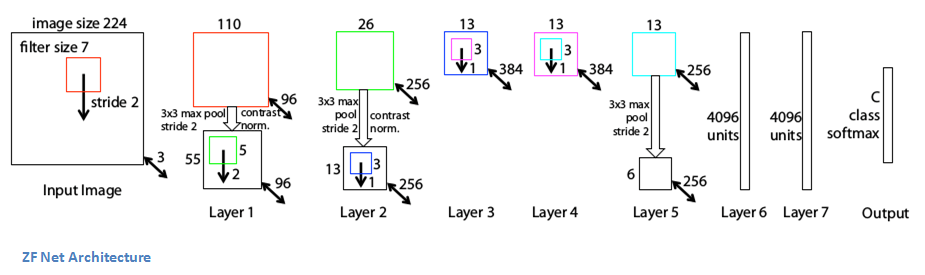

At that time the other competing algorithms were not based on Deep Learning. Since then, almost all of them are. This net had a huge impact on the domain and most of the following nets were more or less based on its architecture. Alexnet (Fig.2) is composed of 5 convolutional layers (C1 to C5 on the schema) followed by two fully connected layers (FC6 and FC7) and a final softmax output layer (FC8). It was initially trained to recognize 1000 different objects.

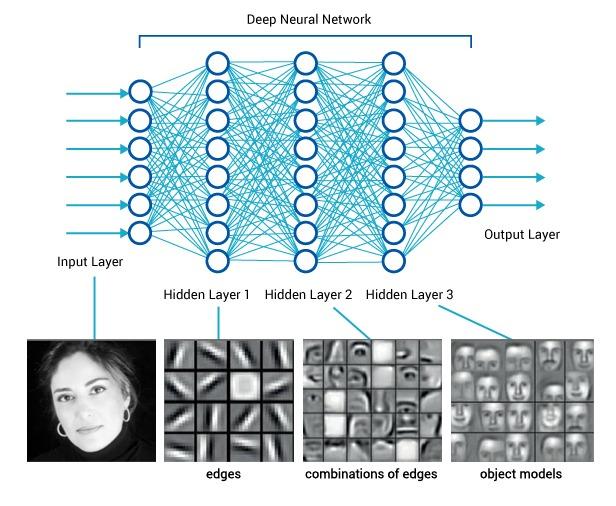

The intuition behind this net is that each convolutional layer learns a more detailed representation of the images (feature map) than the previous one. For example, the first layer is able to recognize very simple shapes or colors, and the last one more complex shapes such as full faces (see Fig.3).

The convolutional layers represent the image in a form much better suited to classification. After the convolutions, each image is represented as a vector of 4096 features (whereas it was initially a vector of 227*227*3 = 154,587 values).

The two fully connected layers and the softmax layer are similar to a multilayer perceptron and could actually be replaced by other kinds of classifiers such as Random Forests or SVMs. However, they are really important for the training phase of the neural net.

ZFNet (2013) and VGG (2014)

Alexnet is a very important network, but the nets we are going to see aren’t actually built on it but on some of its descendants, ZFNet and VGG. ZFNet has the same global architecture as Alexnet, that is to say 5 convolutional layers, two fully connected layers and an output softmax one. The differences include, for example, better-sized convolutional kernels.

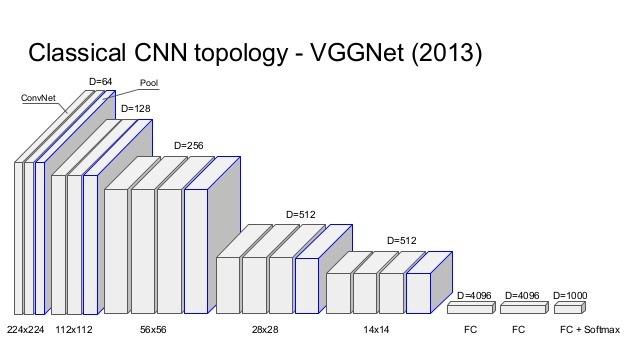

VGG is a very deep and simple net. In its most common version, it has 16 layers (the blue pooling layers aren’t counted on the schema). However, the global architecture is very similar to Alexnet’s: each Alexnet convolutional layer is replaced here by two or three consecutive convolutional layers. Another difference is that every convolutional layer has a 3×3 kernel, unlike the other nets, which use different kernel sizes for each layer.

These important nets are built to classify images, that is to say to output a class when shown an image. This problem is now quite well solved, since today’s best results beat human performance.

But let’s now focus on the main subject: Object Detection in images. This problem is considerably more difficult because the algorithm must not only find all objects in an image but also their exact locations. In other words, the algorithm should be able to detect that, on a specific area of the image (namely a ‘box’), there is a certain type of object.

RCNN (2013)

R-CNN was the first algorithm to apply deep learning to the object detection task. It beat the previous best results by more than 30% (in relative mean average precision) on VOC2012 (Visual Object Classes Challenge) and was therefore a huge improvement in the field of object detection.

As previously mentioned, Object Detection presents two difficulties: finding objects and classifying them. That’s the point of R-CNN: dividing the hard task of object detection into two easier ones:

- Object Proposal (finding objects)

- Region Classification (understanding them)

The Object Proposal task is an active research field and, in 2013, several algorithms were already performing well. We will not detail this task here but it is good to know that there are two main families of algorithms:

- The ones that group super-pixels (Selective Search, CPMC, MCG, …)

- The ones based on a sliding window (EdgeBoxes, …)

R-CNN is actually independent of the Object Proposal algorithm and can use any of these methods.

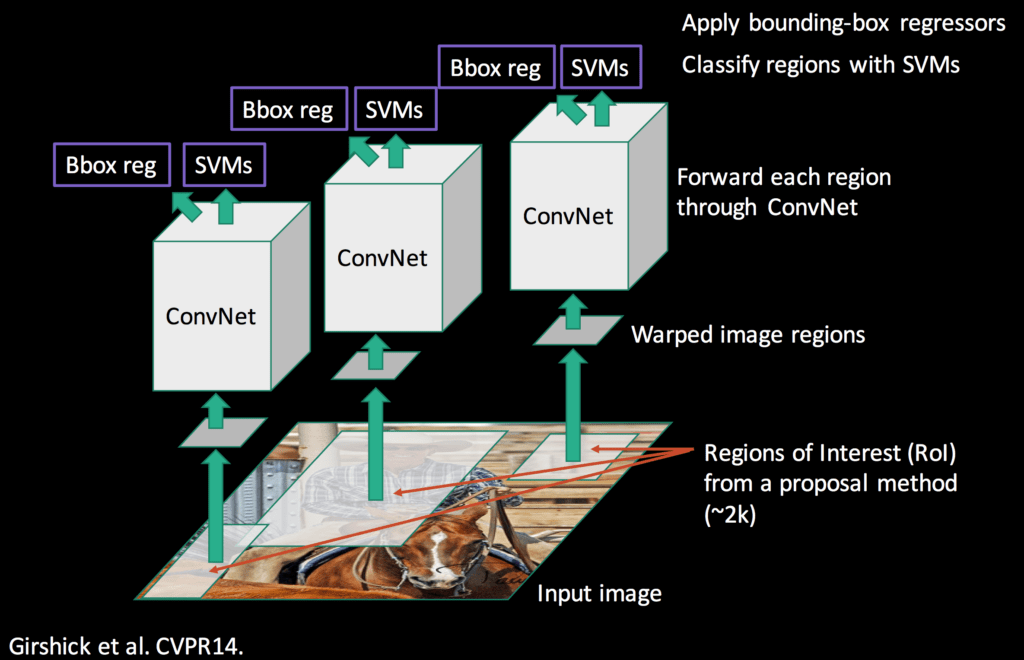

R-CNN takes region proposals (also called object or box proposals) as input. Most Region Proposal algorithms output a great number of regions (around 2000 for a standard image), and R-CNN’s objective is to find which regions are significant and which objects they represent.

R-CNN gets parts of an image and must classify them. We saw earlier that Image Classification is a fairly easy task thanks to Deep Learning nets such as Alexnet. That’s the point of R-CNN: using Deep Learning to classify each Region of Interest output by an Object Proposal algorithm.

R-CNN does not directly use an Alexnet on all region proposals because on top of classifying an image, the model should be able to correct the location of a region proposal if it is not right.

However, if you remember Alexnet, we saw that the fully connected layers after the convolutions could actually be replaced by any other classifier. That’s exactly what is done in R-CNN. The convolutional part of Alexnet is used to compute the features of each region and then SVMs use these features to classify the regions. The advantage of this method is that the neural net (Alexnet) is already trained on a huge image dataset and is very good at extracting features from the region proposals. Before this SVM classification step, the neural net is fine-tuned to consider a new class, “background”, in order to distinguish areas with or without objects.

Region proposals, which are rectangles of different possible shapes, are warped into squares of 227×227 pixels, the input size needed by Alexnet. They are then processed through the net and the values obtained on the last feature map are output. Each region has then become a vector of 4096 features. These feature vectors encode the image information in a form much better suited to classification.

Then a one-vs-rest SVM strategy is applied to all region vectors. That is to say, if the model is trained to recognize 100 classes, then 100 binary SVMs are run on each region. By keeping the best score among all the binary classifiers, we get the corresponding detected object class (or background). Even though each classifier can only distinguish one class from the others, the results are good because the features extracted by Alexnet are shared among all classes.
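
To make the one-vs-rest step more concrete, here is a minimal sketch in Python with NumPy. It assumes linear SVMs that are already trained and treats background as one more class, as described above. The weights, the 4-dimensional toy features (instead of 4096) and the name `classify_regions` are made up for illustration.

```python
import numpy as np

def classify_regions(features, svm_weights, svm_biases, class_names):
    """Score every region with every binary SVM and keep the best class."""
    # scores[i, k] is the decision value of the k-th binary SVM on region i.
    scores = features @ svm_weights.T + svm_biases
    best = scores.argmax(axis=1)
    return [class_names[k] for k in best], scores.max(axis=1)

# Toy setup: 3 regions, 4 features each, 3 binary classifiers (hypothetical values).
class_names = ["background", "person", "motorbike"]
svm_weights = np.array([[1.0, 0.0, 0.0, 0.0],
                        [0.0, 1.0, 0.0, 0.0],
                        [0.0, 0.0, 1.0, 1.0]])
svm_biases = np.zeros(3)
features = np.array([[0.9, 0.1, 0.0, 0.0],
                     [0.1, 0.8, 0.2, 0.0],
                     [0.0, 0.1, 0.6, 0.7]])

labels, best_scores = classify_regions(features, svm_weights, svm_biases, class_names)
print(labels)  # ['background', 'person', 'motorbike']
```

Because all classifiers read the same feature vector, scoring a region against every class is a single matrix product.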

Next comes a bounding box regression step that corrects the location of poor region proposals, for example when the box is not well centered on the object or does not have the right aspect ratio. This regression phase outputs correction factors for the coordinates of the bounding box. For this task, class-specific linear regressors are trained on the feature maps to predict the ground truth bounding boxes.
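
As an illustration of what such correction factors can look like, the sketch below applies four predicted values to a proposal. It assumes the usual R-CNN style parametrization (a center shift proportional to the box size and a log-scale change of width and height). The function name `apply_box_deltas` and the numbers are hypothetical.

```python
import math

def apply_box_deltas(box, deltas):
    """Correct a proposal (x1, y1, x2, y2) with predicted (dx, dy, dw, dh)."""
    x1, y1, x2, y2 = box
    dx, dy, dw, dh = deltas
    w, h = x2 - x1, y2 - y1
    cx, cy = x1 + 0.5 * w, y1 + 0.5 * h
    # Shift the center proportionally to the box size, then rescale width and height.
    new_cx, new_cy = cx + dx * w, cy + dy * h
    new_w, new_h = w * math.exp(dw), h * math.exp(dh)
    return (new_cx - 0.5 * new_w, new_cy - 0.5 * new_h,
            new_cx + 0.5 * new_w, new_cy + 0.5 * new_h)

proposal = (100.0, 100.0, 200.0, 200.0)
# Move right by 10% of the width and double the width (dw = ln 2).
corrected = apply_box_deltas(proposal, (0.1, 0.0, math.log(2.0), 0.0))
print(corrected)  # approximately (60, 100, 260, 200)
```

With all four deltas at zero the proposal is returned unchanged, so the regressor only has to learn small corrections.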

Finally, there is a mechanism to keep only the best regions. If a region overlaps another one of the same class by more than a certain percentage (around 30% works quite well), only the better-scored region is kept. This keeps the number of regions fairly reasonable.
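
This filtering step is commonly implemented as a greedy non-maximum suppression. The sketch below is one possible version for the boxes of a single class. It assumes that the overlap is measured as intersection over union (IoU), and the boxes and scores are toy values.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    return inter / (area_a + area_b - inter)

def non_max_suppression(boxes, scores, threshold=0.3):
    """Return the indices of the kept boxes, best score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    kept = []
    for i in order:
        # Keep a box only if it does not overlap too much with a better one.
        if all(iou(boxes[i], boxes[j]) <= threshold for j in kept):
            kept.append(i)
    return kept

boxes = [(10, 10, 110, 110), (20, 20, 120, 120), (200, 200, 300, 300)]
scores = [0.9, 0.8, 0.7]
kept = non_max_suppression(boxes, scores)
print(kept)  # [0, 2]
```

The second box overlaps the first with an IoU of about 0.68, so it is dropped, while the third one does not overlap anything and survives.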

That’s it for the R-CNN. This algorithm is powerful and works very well but it has several drawbacks:

- It is very slow. The region proposal takes from 0.2 to several seconds depending on the method, then the feature extraction and classification again take several seconds. It can go up to a minute on a CPU for one image.

- It is not a fluid algorithm. There are three different steps that are almost independent and thus need separate training.

SPP-NET

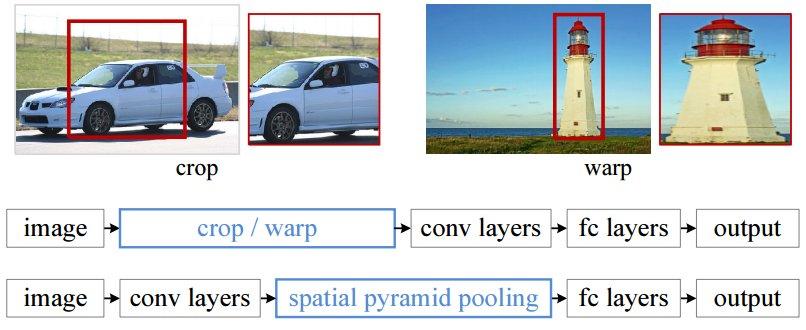

In R-CNN, each region proposal has to be fed to a net with a fixed input size (227×227 for Alexnet). That is to say, every region must have the same dimensions. This is a problem because images and regions can obviously be of any size and aspect ratio. So some transformations need to be performed on images to put them in the right format. Two of the most common transformations are cropping the image (only selecting a right-sized part of the image) and warping the image (changing the aspect ratio). Both of these techniques have obvious drawbacks and might change the image in a way that decreases the detection accuracy.

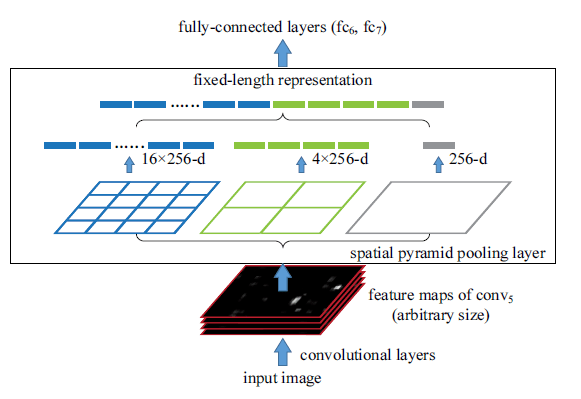

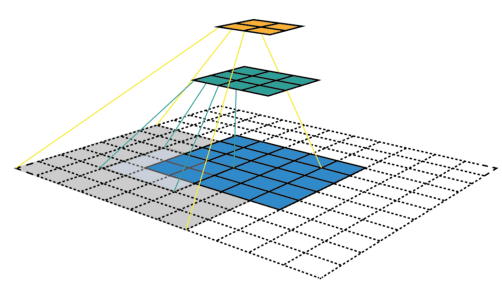

But if we look in detail at neural network layers, we can see that convolutional layers do not actually need a fixed-size input; only the fully connected layers do. Those layers are deep in the net, so there is no reason to fix the input size of the net when it can be done just before the fully connected layers. That is what SPP-Net is about: it introduces a new type of layer named Spatial Pyramid pooling, placed after the convolutional layers and before the fully connected ones. This layer pools the last feature map in a way that generates fixed-length vectors for the fully connected layers. With this Spatial Pyramid pooling (see Fig.8) there is no need to warp or crop the input images.

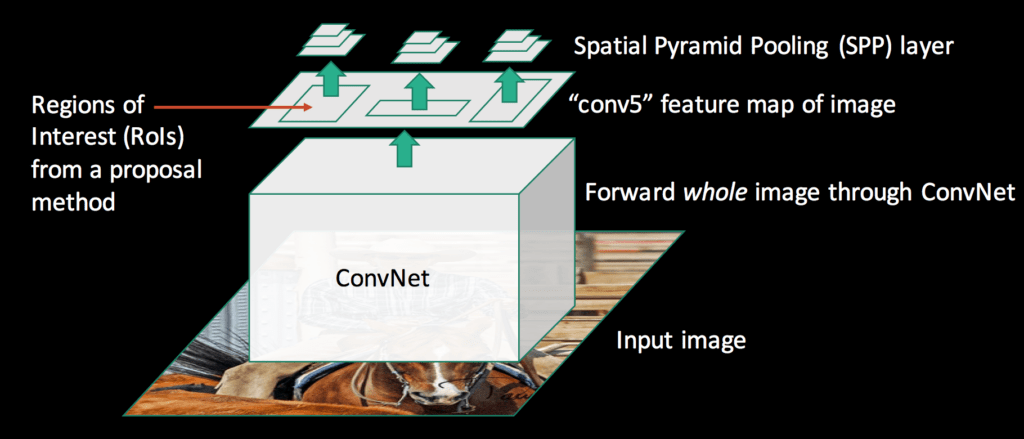

But now, you may be wondering what this method has to do with our Object Detection subject. Actually, there is a direct link: R-CNN is very time-consuming because the features fed to the SVMs are calculated independently for each region proposal. For instance, if 2000 region proposals are extracted, they will be processed by Alexnet one at a time to compute their feature maps. Instead, with the method proposed in SPP-Net, the convolutional layers are computed only once for the entire image (and not for every region proposal). Each region proposal location is then mapped onto the whole-image feature map and fixed-length features are extracted from this feature map with the Spatial Pyramid pooling layer.

In this layer, the number of pooling bins is fixed regardless of the size of the image, whereas in normal pooling the size of the bins is fixed but not their number. In a Spatial Pyramid pooling layer, the size of the bins therefore depends on the image size.

It is called Pyramid Pooling because several poolings with different numbers of bins (so different bin sizes) are done in parallel (see Fig.10). For example, on the schema one pooling is done on the full image (1 bin), one on quarters of the image (4 bins) and one on sixteenths of the image (16 bins). The results of these poolings are then simply concatenated into a vector. The idea behind Spatial Pyramid Pooling comes from Spatial Pyramid Matching, a method that was widely used in image recognition tasks before the emergence of Deep Learning. It is able to handle various scales, sizes and aspect ratios, which is very important in Object Detection.
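
A rough NumPy sketch of such a layer is given below. It assumes max pooling and the three pyramid levels of the example (1, 4 and 16 bins). The bin edges are computed with floor and ceil, which is one common choice rather than necessarily the exact rule used by the authors, and `spatial_pyramid_pool` is an illustrative name.

```python
import math
import numpy as np

def spatial_pyramid_pool(feature_map, levels=(1, 2, 4)):
    """Max-pool a (channels, height, width) map into a fixed-length vector."""
    channels, height, width = feature_map.shape
    pooled = []
    for n in levels:  # n x n bins at this pyramid level
        for i in range(n):
            # Bin edges scale with the map size, so the number of bins stays fixed.
            y0, y1 = math.floor(i * height / n), math.ceil((i + 1) * height / n)
            for j in range(n):
                x0, x1 = math.floor(j * width / n), math.ceil((j + 1) * width / n)
                pooled.append(feature_map[:, y0:y1, x0:x1].max(axis=(1, 2)))
    return np.concatenate(pooled)

rng = np.random.default_rng(0)
small = spatial_pyramid_pool(rng.random((256, 13, 13)))
wide = spatial_pyramid_pool(rng.random((256, 10, 17)))
print(small.shape, wide.shape)  # both have 256 * (1 + 4 + 16) = 5376 entries
```

The two inputs have different sizes and aspect ratios, yet both produce a vector of the same length, which is exactly what the fully connected layers need.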

Then, when the region proposal features are extracted, an SVM classification and a bounding box regression are performed on each one, the same way it is done in RCNN. However, the full process is 10 to 100 times faster at test time and 3 times faster at train time.

This algorithm still has several drawbacks:

- It is still not a fluid algorithm. There are again three separate training steps.

- Unlike R-CNN, the fine-tuning algorithm cannot update the convolutional layers that precede the spatial pyramid pooling. This limitation (fixed convolutional layers) reduces the accuracy of very deep networks. For nets like Alexnet the accuracy is still very good, but for nets such as VGG16 the accuracy may drop.

In the next part, we will focus on Fast R-CNN and on the algorithm that really produced the first image of this post: Faster R-CNN.

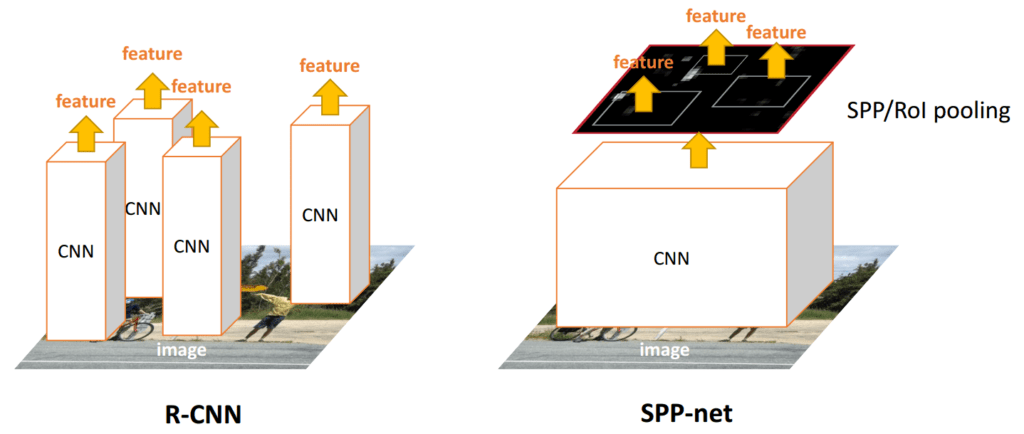

In the first part of this article, the main focus was on some very important deep neural networks (AlexNet, VGGNet) and their use in an object detection task. The algorithms presented were R-CNN and SPP-Net. Here is a reminder of how they work (Fig.1). The main difference between the two is that with R-CNN the convolutional features are computed for each region proposal, which is expensive, while with SPP-Net this is done only once on the whole image.

However, both algorithms were multi-staged: assuming that region proposals were given, a first stage was to extract features, and a second one to classify the regions and refine their location.

Fast – RCNN

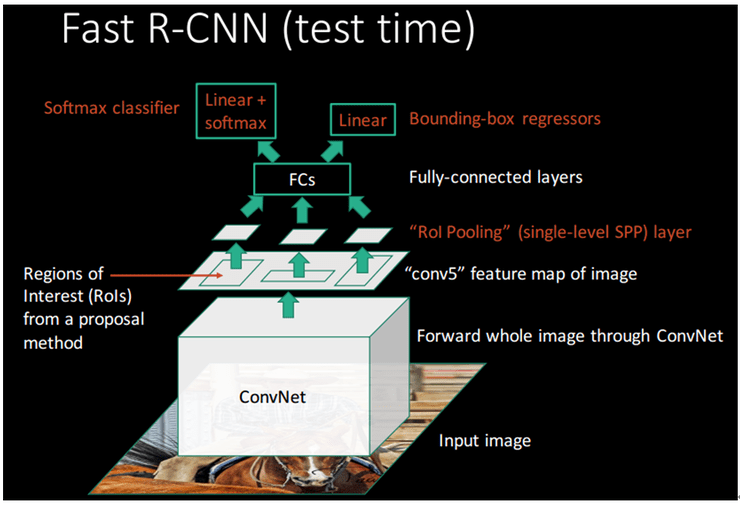

The main goal of Fast-RCNN is to improve these two algorithms by extracting the features and making an end-to-end classification with a single algorithm. It is easier to train, faster at test time (the classification phase is almost real-time) and more accurate. However, this algorithm is still independent of the box proposal algorithm, so regions are still proposed separately, by another algorithm.

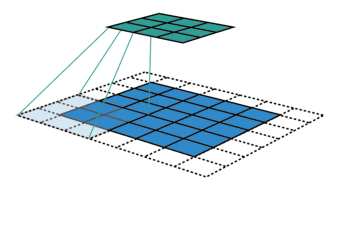

The principle here is similar to SPP-Net: the convolution map will only be computed once for the whole image and then each region proposal will be projected on it before going through a specific pooling layer and fully connected ones.

Like in SPP-Net, the specific pooling layer is used to produce a fixed size vector just before the first fully connected layer. This layer is called the RoI (Region of Interest) pooling layer and it is actually a special case of the SPP-Net spatial pyramid pooling layer with only one pyramid level. Keeping only one pyramid level makes the network much easier to train compared to SPP-Net (it is easier to compute its derivative during back propagation), allowing fine tuning of the convolutional layers.

The difference with SPP-Net is that, following the fully connected layers, the network is divided into two output layers: the first one does a classification (thanks to a simple softmax layer with N+1 outputs: N object classes + a background one) and the other one does a regression over the four coordinates of the bounding box. The two separate training phases of R-CNN (SVM classification and linear bounding box regression) are now inside the network itself.
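
The two output layers can be sketched as two small linear layers reading the same feature vector. This is only an illustration with random, untrained weights and made-up sizes (8 features, N = 3 classes). It also simplifies the regression branch to a single set of four offsets, and `detection_heads` is a hypothetical name.

```python
import numpy as np

def detection_heads(fc_features, w_cls, b_cls, w_box, b_box):
    """Map one region's features to class probabilities and a box offset."""
    logits = w_cls @ fc_features + b_cls       # N + 1 scores, index 0 = background
    exp = np.exp(logits - logits.max())        # subtract the max for numerical stability
    probs = exp / exp.sum()                    # softmax over the N + 1 classes
    box_offsets = w_box @ fc_features + b_box  # 4 regression outputs
    return probs, box_offsets

rng = np.random.default_rng(0)
n_classes, n_features = 3, 8                   # toy sizes (assumption)
fc_features = rng.normal(size=n_features)
w_cls = rng.normal(size=(n_classes + 1, n_features))
w_box = rng.normal(size=(4, n_features))
probs, box_offsets = detection_heads(fc_features, w_cls, np.zeros(n_classes + 1),
                                     w_box, np.zeros(4))
print(probs.shape, box_offsets.shape)
```

Since both branches share the same input, their errors can be added into one loss and back-propagated through the whole network at once.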

Deep Convolutional Dive in Object Detection

To understand the process a bit better, it’s interesting to visualize what is going on in the RoI pooling layer during a forward pass. However, to make things clear, let’s first focus on how convolutional layers work:

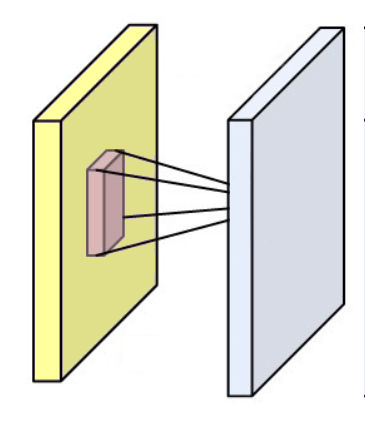

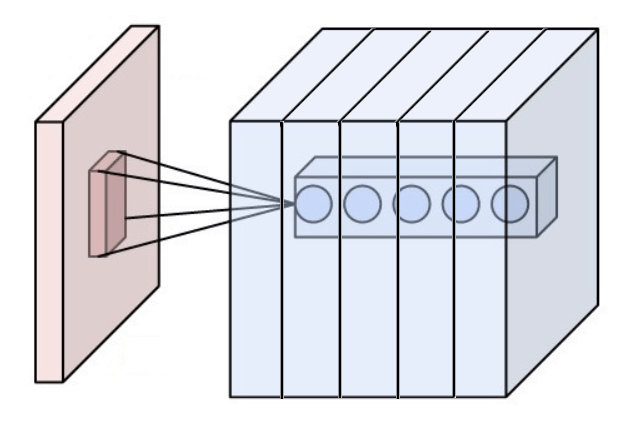

A convolutional layer is composed of weights gathered as a kernel (or a filter) and it produces a convolutional map (or feature map, activation map) by convolving this filter across the dimensions of an input (an image or the output of another convolutional layer) as you can see in the figure below.

Some more examples with the input image in blue, the convolving 3*3 kernel in dark blue and the convolutional map in green. On the right animation, you can see the weights of the kernel in the bottom right of the cells.

For example, if a convolutional layer has a kernel of size 3*3, each entry of the convolutional map will be calculated as the dot product of 9 pixel values with the weights of the kernel. In a classic RGB image, a pixel is defined by three values, so each entry would actually be the sum of 27 weighted values (9 pixels * 3 color values). Depending on the size of the kernel and on the way it is convolved across the input, it is possible to vary the size of the produced convolutional map.
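
The arithmetic above can be checked with a naive NumPy implementation. It is a sketch assuming no padding and a stride of 1, with an all-ones image and kernel so that each output entry simply counts the weighted values that were summed.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide one kernel over an (H, W, C) image, without padding, stride 1."""
    kh, kw, _ = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for y in range(out_h):
        for x in range(out_w):
            patch = image[y:y + kh, x:x + kw, :]
            # Dot product of the patch with the kernel: 3*3*3 = 27 terms for RGB.
            out[y, x] = np.sum(patch * kernel)
    return out

image = np.ones((5, 7, 3))   # toy 5x7 RGB image
kernel = np.ones((3, 3, 3))  # one 3x3 kernel spanning the 3 color channels
conv_map = conv2d_valid(image, kernel)
print(conv_map.shape, conv_map[0, 0])  # (3, 5) 27.0
```

Without padding, a 3*3 kernel shrinks each spatial dimension by 2, which is one way the size of the produced map can vary.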

The group of pixels used in the input image to produce one entry on a convolutional map is called its receptive field. So an entry actually contains a bit of information from all the pixels composing its receptive field. For the first convolutional layer the receptive field is actually as large as the kernel. However, for deeper layers an entry might have a receptive field that takes half the pixels of the image for example.

Receptive fields of different layers. The receptive field of the first layer (in green) is of the same size as its kernel while the receptive field of the second layer (in yellow) is way larger and actually covers more than a quarter of the image.
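
The growth of the receptive field can be computed layer by layer. The sketch below uses the standard recurrence for stacked convolutions (the field grows by (kernel - 1) times the current spacing between neighboring entries, measured in input pixels). The three-layer stack is a hypothetical example, not the network of the figure.

```python
def receptive_field(layers):
    """layers: list of (kernel_size, stride); returns the field size after each layer."""
    size, jump = 1, 1  # start from one input pixel; jump = spacing between neighbors
    sizes = []
    for kernel, stride in layers:
        size += (kernel - 1) * jump
        jump *= stride
        sizes.append(size)
    return sizes

# Hypothetical stack: three 3x3 convolutions, the second one with a stride of 2.
print(receptive_field([(3, 1), (3, 2), (3, 1)]))  # [3, 5, 9]
```

The first value equals the kernel size, as stated above, and every strided layer makes the following layers grow faster.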

When fed to a neural network, an image will successively pass through several convolutional layers. As we saw, convolutional layers actually gather the information contained in several pixels together. The principle of convolutional neural networks is that each layer projects its input onto a feature map of decreasing size. This way, the pixel information is compressed more and more by each layer.

In neural networks, compression may be seen as generalization: as the network gradually compresses the information, it is able to find pixel patterns that are similar in different images. If a specific pattern is present in several images of the same object, finding it in a new image might be an indicator that this image represents the same object as well.

The patterns found by the successive layers are increasingly complex because the receptive fields of the entries on the feature map get bigger with each layer. For example, the first layers might find simple color or shape patterns while deeper layers might find complex ones like a wheel or a helmet. The neural network will then use the presence of these patterns to make the classification.

Compression allows generalization. However, in the end the neural network still needs enough information in its fully connected layers to be able to make meaningful decisions. That’s why a convolutional layer does not output only one convolutional map, but several. Thus, a convolutional layer is actually composed of several kernels. Each kernel will search for a different pattern in the image.

In a typical network, the successive convolutional layers output an increasing number of convolutional maps to “counter” the compression and keep enough information (for instance, a single convolutional map of size 32*64 would not be enough to encode the information contained in a 900*450 image, but a few hundred of these maps might).

During the training, the weights in the kernels are modified and learnt in order to detect specific patterns. Those patterns are determined by the training dataset and the problem to solve and they become increasingly complex the deeper we go.

However a complex pattern can be seen as a combination of more simple ones. Actually this is how we think human babies are representing and learning things (composing what they already know to understand new things). This explains the layered layout of neural networks: the first convolutional layers find simple patterns and by aggregating and combining those simple patterns, the following layers can find more complex ones.

This is one of the reasons for the generalization success of Deep Learning: the first layers are able to learn general rules that are not task-specific and thus can be used for other things. This explains the effectiveness of fine-tuning and transfer learning (using a neural network trained on a specific task and modifying it just a little bit to accomplish a different task). When a network is fine-tuned, we build on its trained general knowledge and simply add a little bit of task-specific understanding.

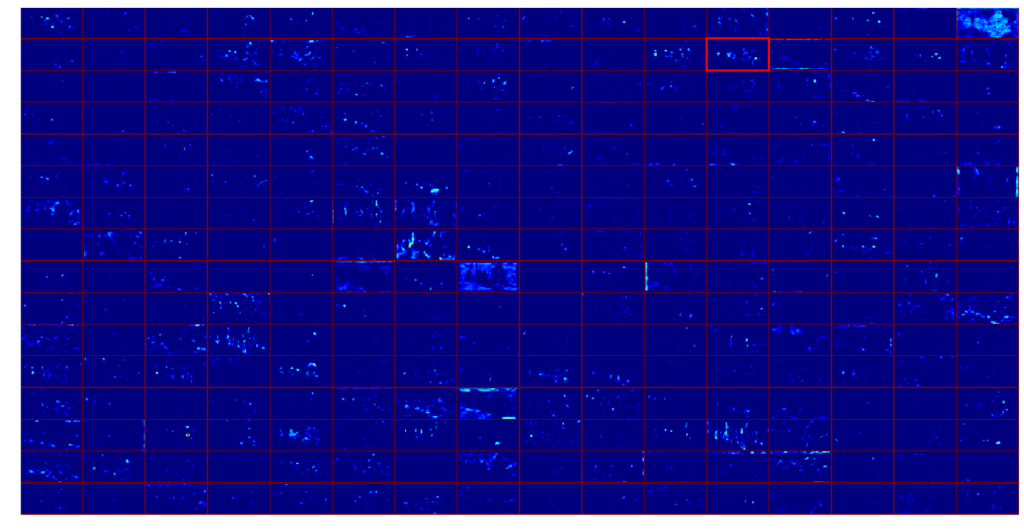

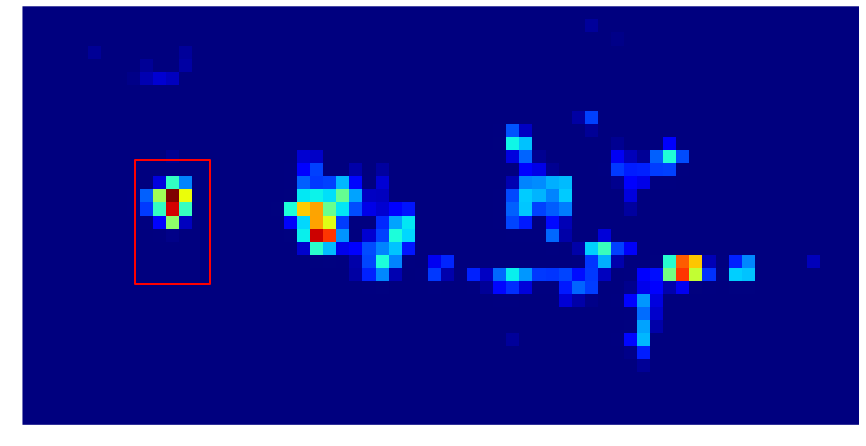

That’s enough theory; let’s see what happens when our image is processed by our network. We will focus on one region proposal (in red).

Let’s first take a look at the output of the last convolutional layer. The feature map is composed of 256 convolutional maps of size 32*64 that are represented here as a 16*16 grid, each box being a convolutional map.

As it’s not really enlightening to show all these convolutional maps at once, let’s focus on only one, the one highlighted on the feature map.

On these feature maps, a red pixel indicates a high value and a blue pixel a low value, following this colormap:

A high value on the feature map is what we call an activation. An activation says that a useful pattern for the classification is present there. As a reminder, this pattern is “described” by the successive kernels of the convolutional layers.

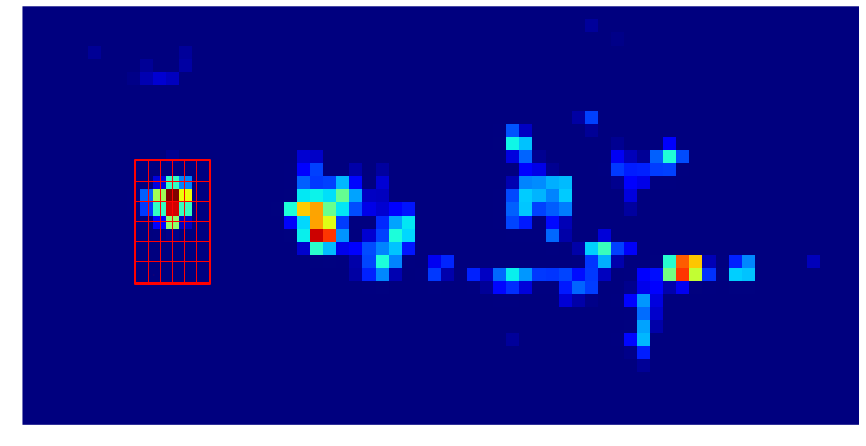

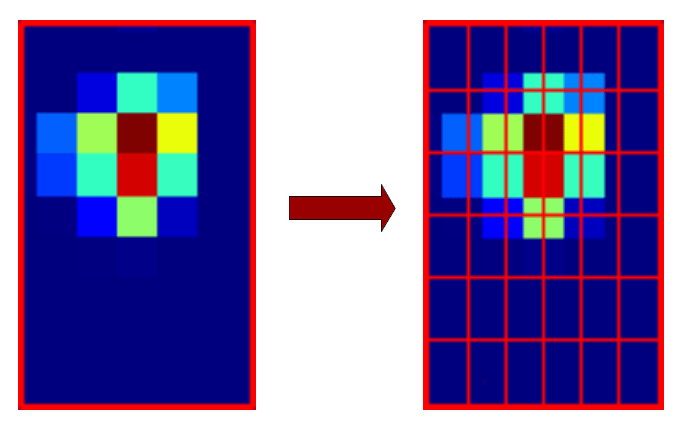

So, this activation map describes the presence of a particular pattern in the whole image, but in Object Detection we are only interested in the sub-image representing our region proposal. We can actually project this region proposal on the convolution map and then only focus on the projected part.

In order to get fixed-size information for the following layers of the net, the RoI pooling layer will cut the region proposal into 6*6 smaller regions. Like in SPP-Net, these regions aren’t fixed in size: they actually depend on the region proposal size.

Something like this should be obtained:

Then for each region, only one value is kept thanks to a max pooling. This gives us a 6*6 matrix (shown here as an image).

This 6*6 matrix is then flattened into a vector of size 36.

This vector represents the information for one of the 256 activation maps. The corresponding entries on all the activation maps contain information about the same region of the input image but compressed in different ways, so they are all useful. That is why this step is done for all the 256 activation maps.

We then get 256 vectors of size 36 that are simply concatenated into a big vector (256*36 = 9216 features). This vector contains all the information the convolutional layers find relevant for the classification. The rest of the network will then use this vector to take its decision.
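
The whole walk-through (projection, 6*6 cut, max pooling, flattening and concatenation) fits in a short NumPy sketch. It assumes a 900*450 input image (width * height) mapped to the 32*64 feature map, random values instead of real activations, and a simple floor/ceil rule for the projection and the bin edges. The region coordinates and the name `roi_pool` are made up.

```python
import math
import numpy as np

def roi_pool(feature_map, roi, image_size, bins=6):
    """Max-pool the part of a (C, H, W) map covered by roi into C*bins*bins values.

    roi is (x1, y1, x2, y2) in image pixels, image_size is (height, width).
    """
    channels, fh, fw = feature_map.shape
    img_h, img_w = image_size
    x1, y1, x2, y2 = roi
    # Project the region from image coordinates onto the feature map grid.
    fx1, fx2 = math.floor(x1 * fw / img_w), math.ceil(x2 * fw / img_w)
    fy1, fy2 = math.floor(y1 * fh / img_h), math.ceil(y2 * fh / img_h)
    region = feature_map[:, fy1:fy2, fx1:fx2]
    _, rh, rw = region.shape
    out = np.empty((channels, bins, bins))
    for i in range(bins):
        ya, yb = math.floor(i * rh / bins), math.ceil((i + 1) * rh / bins)
        for j in range(bins):
            xa, xb = math.floor(j * rw / bins), math.ceil((j + 1) * rw / bins)
            out[:, i, j] = region[:, ya:yb, xa:xb].max(axis=(1, 2))
    # One vector of 36 values per activation map, concatenated channel by channel.
    return out.reshape(-1)

rng = np.random.default_rng(0)
feature_map = rng.random((256, 32, 64))
vector = roi_pool(feature_map, roi=(200, 100, 600, 400), image_size=(450, 900))
print(vector.shape)  # (9216,)
```

Whatever the size of the region proposal, the output always has 256 * 36 = 9216 entries, so the fully connected layers can be reused for every region.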

The fast-RCNN network will finally output the class of this region proposal (a motorbike) and possibly an offset to the bounding box coordinates.

Faster – RCNN

With Fast-RCNN, real-time Object Detection is possible if the region proposals are already pre-computed. However this task may take from around 0.2 seconds to one or two seconds for one image depending on the method.

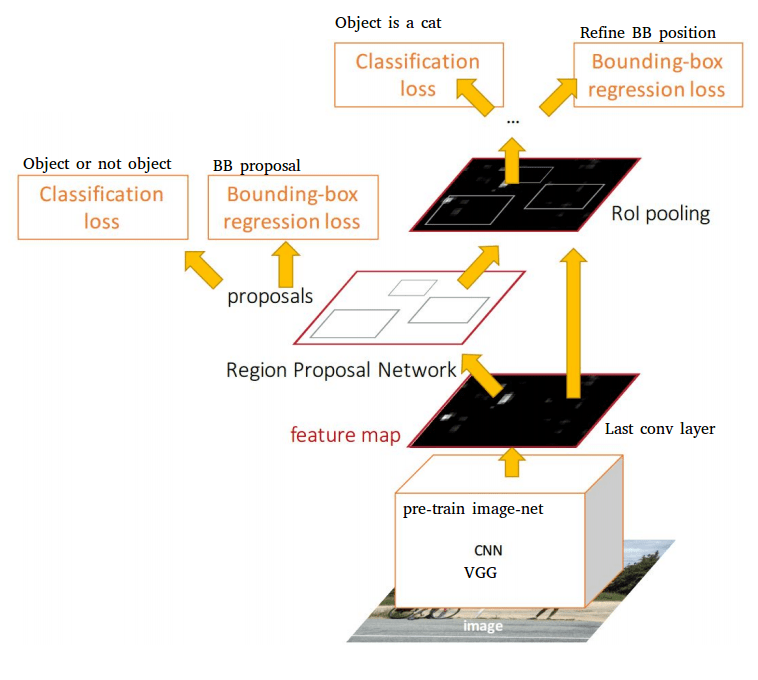

This is the main point of Faster-RCNN: making the region proposal algorithm a part of the neural network. It merges Fast-RCNN with a Region Proposal Network, producing better object detection results and, for the first time, in real time (hence the name :)). Moreover, the results no longer depend on the accuracy of an external region proposal algorithm.

Like Fast-RCNN, a Faster-RCNN network may be built upon different already existing networks. Some modifications have to be made, but only after the convolutional layers. Two branches will follow these layers:

- A first one, the RPN (Region Proposal Network) that will produce the region proposals based on the last feature map.

- A second one, the fast RCNN network that will classify the RPN proposals

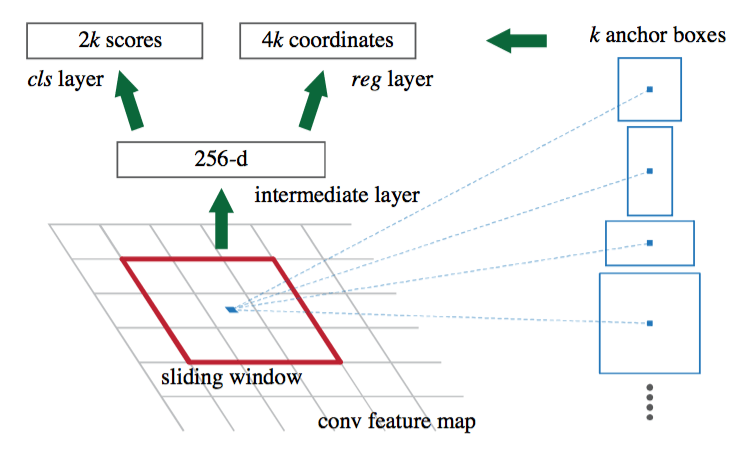

The RPN is a fully convolutional net that slides over the feature map and tells, for each position, whether there is an object or not (with a probability and potentially bounding box offsets), regardless of the class of the object.

In order to have a system that is robust to translation and scale, the RPN uses an algorithm based on anchors. For each position of the sliding window on the feature map, 9 anchors are placed. The anchors are all centered on the sliding window; only their scale and ratio change. There are three scales and three ratios (1:1, 2:1 and 1:2), making the 9 anchors.
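
A minimal sketch of how the 9 anchors of one position could be generated is shown below. The three ratios come from the text. The three scales (128, 256 and 512 pixels) are an assumption, as is the convention that each anchor keeps an area of scale squared. `make_anchors` is an illustrative name.

```python
import math

def make_anchors(cx, cy, scales=(128, 256, 512), ratios=(1.0, 2.0, 0.5)):
    """Return 9 boxes (x1, y1, x2, y2) centered on (cx, cy).

    Each anchor has an area of scale**2 and a width / height equal to ratio.
    """
    anchors = []
    for scale in scales:
        for ratio in ratios:
            w = scale * math.sqrt(ratio)
            h = scale / math.sqrt(ratio)
            anchors.append((cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2))
    return anchors

anchors = make_anchors(300.0, 200.0)
print(len(anchors))  # 9
print(anchors[0])    # (236.0, 136.0, 364.0, 264.0), the 128x128 square
```

All 9 boxes share the same center, so only the shape differs from one anchor to the next.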

Each anchor is processed through the convolutional layers of the RPN, and the network outputs the probability that this anchor represents an object and potentially an offset to correct the anchor dimensions. For example, if the object is very long, a 2:1 ratio might not be enough to cover it, so the RPN will output an offset to change the anchor ratio.

If the feature map on which the sliding window is applied has a height H and a width W (for our motorbike example, H = 32, W = 64), there are H*W*9 anchors produced (18432 anchors) and tested (processed by the convolutional layers of the RPN). On a classic object image, usually a few thousand are considered as representing objects (the others are considered as background) and only the top N are kept (based on classification probability). Experiments showed that keeping around 300 proposals gives good results.

The second part of the net is a classic Fast-RCNN. It will process the 300 proposals provided by the RPN to extract a fixed-length vector from the feature map and classify the object.
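
The anchor count and the top-N selection can be illustrated in a few lines of Python. The objectness scores below are random placeholders standing in for the RPN output, and `top_n_proposals` is a hypothetical helper.

```python
import random

H, W, ANCHORS_PER_POSITION = 32, 64, 9
num_anchors = H * W * ANCHORS_PER_POSITION
print(num_anchors)  # 18432

def top_n_proposals(scores, n=300):
    """Indices of the n anchors with the highest objectness score."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return order[:n]

rng = random.Random(0)
objectness = [rng.random() for _ in range(num_anchors)]  # placeholder scores
proposals = top_n_proposals(objectness)
print(len(proposals))  # 300
```

Only these 300 indices would then be passed on to the Fast-RCNN branch, which is far cheaper than classifying all 18432 anchors.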

A Faster-RCNN is a complex network and training it is not an easy task. There are several tricks and methods used for the training that won’t be detailed here, except for one as an example: in order to train the RPN, ground truth anchors must be generated to be compared with the anchors produced by the RPN, so that the network learns whether the anchors it is producing are good or not.

For our bike image, that would mean generating 18432 ground truth anchors, which is expensive. That’s why only a random subsample of around 250 anchors is generated for each image. However, the number of negative anchors is way above the number of positive ones in a normal image. So the subsampling is actually built to have about half positive and half negative anchors. Without this step the RPN would be biased toward negative anchors, as they are far more numerous. This is one example of a method that is needed for the training, but there are others, and they need to be applied to the full training dataset.
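
The balanced subsampling can be sketched as follows. The batch size of 256 and the rule of padding with negatives when there are too few positives are assumptions made for the example, as are the toy labels (200 positive anchors out of 18432).

```python
import random

def sample_anchors(labels, batch_size=256, rng=None):
    """Pick anchor indices with at most half positives, padded with negatives.

    labels[i] is 1 for a positive anchor and 0 for a negative one.
    """
    rng = rng or random.Random()
    positives = [i for i, lab in enumerate(labels) if lab == 1]
    negatives = [i for i, lab in enumerate(labels) if lab == 0]
    n_pos = min(len(positives), batch_size // 2)
    n_neg = min(len(negatives), batch_size - n_pos)
    return rng.sample(positives, n_pos) + rng.sample(negatives, n_neg)

labels = [1] * 200 + [0] * 18232  # toy labels: far more negatives than positives
batch = sample_anchors(labels, rng=random.Random(0))
print(len(batch), sum(labels[i] for i in batch))  # 256 128
```

A uniform random sample of the same size would contain only a handful of positives, which is the bias this step avoids.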

Two methods are discussed in the paper to train the full Faster-RCNN network. The first one was used because of a tight deadline, at a time when there was no guarantee that the other method would actually work. The second method does work, even though it is based on an approximation, and it is faster and easier to train with similar results.

- Alternating training: In this method, the RPN is trained first, followed by the Fast-RCNN that is trained with the proposals. Then the Fast-RCNN is fine-tuned by the RPN and, as a last step, the RPN is fine-tuned by the Fast-RCNN. This is not a simple pipeline and you need to run several trainings, which is not ideal. This training method is actually a way to fake a single net, but the RPN and the Fast-RCNN are actually separate.

- Approximate joint training: In this method, there is really only one single net. For the optimization of the shared layers, both the RPN and Fast-RCNN errors are combined. This method is called approximate because a calculation simplification is performed during learning (a part of the derivative is ignored during backpropagation).

We mainly discussed Fast-RCNN and Faster-RCNN as based on AlexNet or VGG, but they are now built on more recent deep learning networks, which keeps improving their accuracy. For example, in the recent Tensorflow Object Detection API, you can find a Faster-RCNN based on ResNet and another one based on Inception ResNet v2. Those two networks are recent deep neural networks that perform very well in image classification tasks.

Object Detection algorithms continually improve along with the image classification deep neural networks they are based on. However, object detection is still an active research field and new algorithms are also being created regularly. Here are some of the best performing new methods: YOLO, R-FCN, SSD.