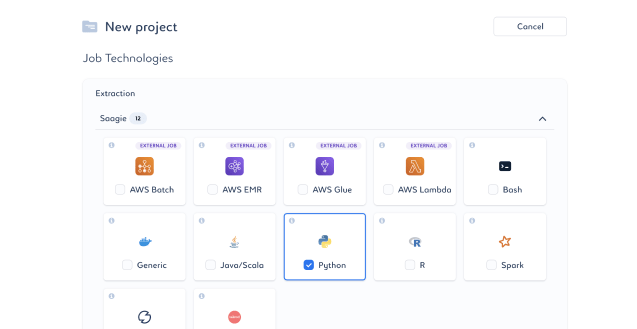

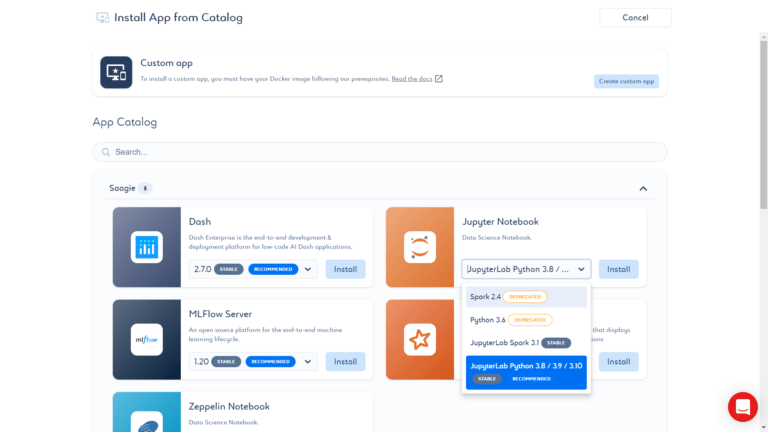

Technologies and Apps

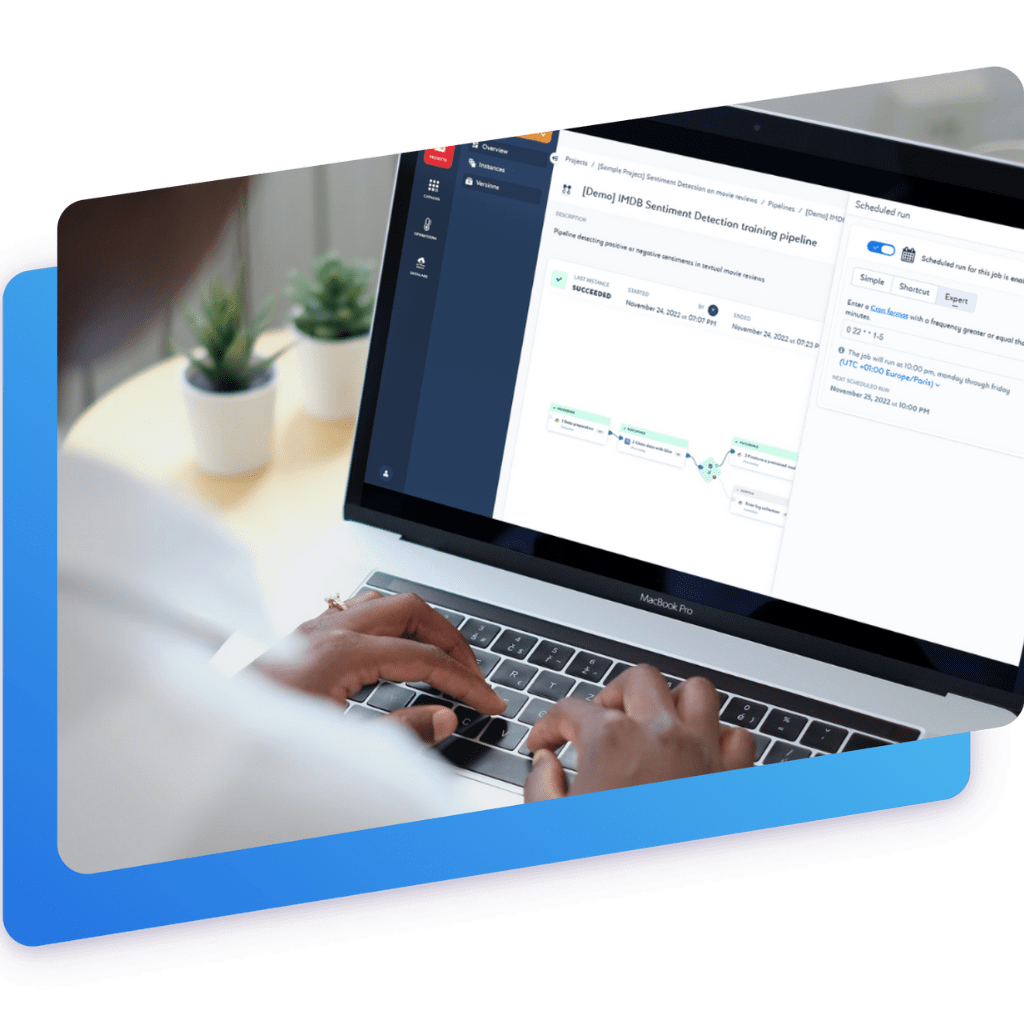

Access a catalog of technologies for running your processing as jobs, either on the Saagie cluster or on your own infrastructure. The catalog includes a repository of technologies covering many execution contexts, provided and maintained by Saagie. You can also create your own repositories and use the Saagie SDK to add any technology from the data market.

Filters

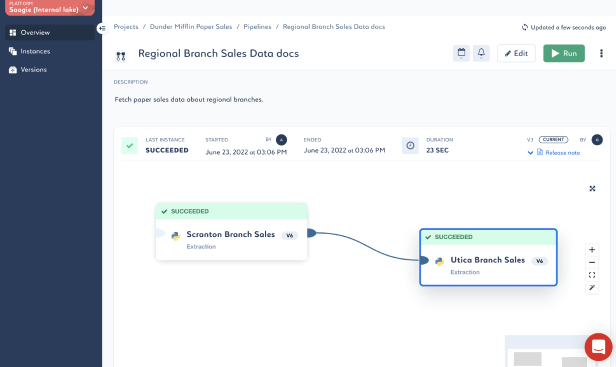

Discover the incredible features of Saagie

A question about the company or our solutions?